Analysis and post-processing

(page under construction)

This page collects information on existing tools which can be used to analyse data produced using the Einstein Toolkit. See also Visualization of simulation results.


SimulationTools

| Homepage | simulationtools.org |

|---|---|

| Authors | Ian Hinder and Barry Wardell |

| License | GPLv3 |

| Requirements | Mathematica (proprietary) |

| Other info | Documentation, unit tests (automatic on commit) |

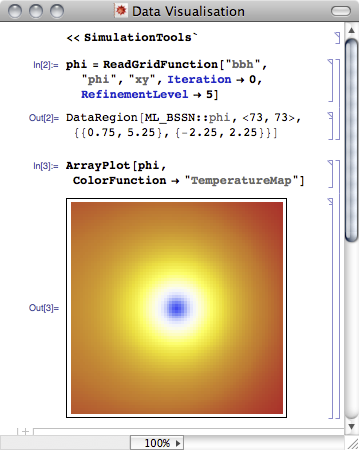

SimulationTools provides a functional interface to simulation data which deals transparently with merging output from different segments, and can read data from several different thorns, depending on what is available. The package supports reading Carpet HDF5 data and doing the required component-merging etc. so that you can do analysis on the resulting data in Mathematica. This supports 1D, 2D and 3D data, but is essentially dimension-agnostic. It has a component called SimulationOverview which displays a panel summarising a BBH simulation, including run speed and memory usage as a function of coordinate time, BH trajectories, separation, waveforms, etc. SimulationTools is coupled to a C++ replacement for the Mathematica HDF5 reader.
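As a rough illustration of what merging output from different segments involves, the following Python sketch joins time series from consecutive restart segments. It is not SimulationTools code (which is written in Mathematica); the function name `merge_segments` and the rule that a later segment overrides overlapping samples of an earlier one are assumptions made for this example.

```python
def merge_segments(segments):
    """Merge (time, value) samples from consecutive restart segments.

    Assumes the segments are given in run order and each one is sorted
    by time. Samples of an earlier segment at or after the first time of
    a later segment are dropped, so data recomputed after a restart
    replaces the older samples.
    """
    merged = []
    for segment in segments:
        if not segment:
            continue
        t_start = segment[0][0]
        # Discard the tail of the merged data that this segment recomputes.
        while merged and merged[-1][0] >= t_start:
            merged.pop()
        merged.extend(segment)
    return merged


segment_0 = [(0.0, 1.0), (1.0, 2.0), (2.0, 3.0), (3.0, 4.0)]
segment_1 = [(2.0, 30.0), (3.0, 40.0), (4.0, 50.0)]  # restarted from t = 2
combined = merge_segments([segment_0, segment_1])
```

Here the restart repeats the times 2 and 3, and the merged series keeps the values from the later segment.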

SimulationTools is available under the GPL and is developed on BitBucket; it would be a good candidate for inclusion in the Einstein Toolkit at some point.

A more detailed overview of the features in SimulationTools is available.

PostCactus

| Homepage | https://github.com/wokast/PyCactus |

|---|---|

| Authors | Wolfgang Kastaun |

| License | GPLv3 |

| Requirements | Python 2.7, HDF5, H5Py, NumPy, SciPy |

| Other info | Wiki |

This package contains modules to read and represent various CACTUS data formats in Python, and some utilities for data analysis. In detail,

- simdir is an abstraction of one or more CACTUS output directories, allowing access to data of various type by variable name, and seamlessly combining data split over several directories.

- cactus_grid_h5 reads 1D, 2D, and 3D data from CACTUS HDF5 datasets.

- cactus_grid_omni uses whatever data source it finds, e.g. cutting a 3D file to obtain xy-plane data.

- grid_data represents simple and mesh-refined datasets, common arithmetic operations on them, as well as interpolation.

- cactus_scalars reads CACTUS 0D/Reductions ASCII files representing timeseries.

- cactus_gwsignal reads GW data.

- cactus_multipoles reads multipole decomposition data (ASCII).

- timeseries represents timeseries and provides resampling and numerical differentiation.

- fourier_util performs FFT on timeseries and searches for peaks.

- unitconv performs unit conversion with focus on geometric units, including predefined CACTUS, PIZZA, and CGS systems.
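To make the idea of converting from geometric units concrete, here is a minimal Python sketch for units in which G = c = M_sun = 1. It does not use the unitconv module or reproduce its API; the function `geom_to_si` and the constant names are hypothetical, and the numerical constants are the IAU nominal solar mass parameter, the exact speed of light, and the CODATA 2018 value of G.

```python
GM_SUN = 1.3271244e20  # m^3 s^-2, IAU nominal solar mass parameter
C = 299792458.0        # m s^-1, exact
G = 6.67430e-11        # m^3 kg^-1 s^-2, CODATA 2018

# SI values of the geometric base units for G = c = M_sun = 1.
LENGTH_M = GM_SUN / C**2  # about 1.48 km
TIME_S = GM_SUN / C**3    # about 4.9 microseconds
MASS_KG = GM_SUN / G      # one solar mass in kg


def geom_to_si(value, length=0, time=0, mass=0):
    """Convert a quantity of dimension length^a time^b mass^c to SI.

    The exponents are passed as keyword arguments (hypothetical interface).
    """
    return value * LENGTH_M**length * TIME_S**time * MASS_KG**mass


# A rest-mass density of 1 in geometric units, expressed in g/cm^3
# (1 kg/m^3 equals 1e-3 g/cm^3).
density_cgs = geom_to_si(1.0, length=-3, mass=1) * 1e-3
```

Passing exponents rather than unit names keeps the sketch short; a real unit-conversion module would typically offer predefined unit systems instead.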

yt

| Homepage | yt project |

|---|---|

| Authors | many |

| License | BSD 3-clause license |

| Requirements | all in one install script |

| Other info | Documentation |

yt is mostly a visualization toolkit with basic support for reading Cactus data, although it can also do some data manipulation. The current version of the Einstein Toolkit frontend is available in Kacper Kowalik's fork of yt. It is a work in progress and highly experimental, so many datasets will not be read correctly. To obtain it:

hg clone ssh://hg@bitbucket.org/xarthisius/yt

cd yt

hg bookmark cactus

python setup.py build_ext -i

If this works, yt is installed. Now add $(pwd) to your PYTHONPATH.

Some example scripts for using and extending yt (and using the Einstein Toolkit frontend) can be found here.

PyCactus

| Homepage | https://bitbucket.org/knarrff/pycactus |

|---|---|

| Authors | Roberto De Pietri, Francesco Maione, Frank Löffler |

| License | GPL |

| Requirements | Python |

PyCactus is a set of tools, currently developed primarily to read Carpet ASCII and HDF5 data (especially 0D/1D) for use in Python and for plotting with matplotlib. Documentation is essentially non-existent at this point, but the scripts are not long and should for the most part be understandable.

rugutils

| Homepage | https://bitbucket.org/fguercilena/rugutils (not working); realastro fork of Guercilena's code |

|---|---|

| Authors | Federico Guercilena |

| License | GPLv3 |

| Requirements | Python 3, NumPy, Matplotlib, h5py, HDF5 |

A minimalist set of tools to read and plot Carpet data, with an equally minimalist set of dependencies, written in Python 3. The tools can read and plot 0D, scalar, and 1D data in ASCII format and 2D data in HDF5 format. Their focus is on looking at large data sets quickly and without hassle, rather than on producing beautiful, publication-ready figures. The main piece of software is the "rug" script, with four subcommands (rope, freeze, snake and window) to deal with various kinds of data. Quite a lot of documentation is available on the command line by typing 'rug doc command', where command is one of the available command names.

scidata

| Homepage | https://bitbucket.org/dradice/scidata |

|---|---|

| Authors | David Radice |

| License | GPLv3 |

| Requirements | Python 3, NumPy, h5py |

Simple, high-performance, Cactus/Carpet data reader for python-2.7. It serves as the underpinning of pyGraph.

pyGWAnalysis

| Homepage | https://svn.einsteintoolkit.org/pyGWAnalysis/ |

|---|---|

| Authors | Christian Reisswig |

| License | MIT |

| Requirements | Python 2.7, NumPy |

A simple set of GW analysis tools in Python. It can be made to read Multipole data using h5py, for example in pyGWAnalysis/Examples/integrate-modes-ffi.py:

t = dataset[:,0]

RePsi4 = dataset[:,1]

ImPsi4 = dataset[:,2]

WF[l][m] = WaveFunction(t, RePsi4, ImPsi4)

# WF[l][m].Load("rPsi4_l"+str(l)+"m"+str(m)+".dat")
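The snippet above assumes that `dataset` already holds rows of (time, Re Psi4, Im Psi4). The following Python sketch shows one way such an array might be obtained with h5py and split into columns. The helper names `load_mode` and `split_psi4_columns` are hypothetical, the dataset name must be supplied by the caller because it depends on how the Multipole output was written, and the three-column layout is taken from the snippet above rather than from the Multipole documentation.

```python
def load_mode(path, dataset_name):
    """Return one HDF5 dataset as an in-memory array.

    `path` and `dataset_name` are supplied by the caller; no particular
    naming scheme for the datasets is assumed here.
    """
    import h5py  # imported lazily so the column helper works without h5py

    with h5py.File(path, "r") as h5file:
        return h5file[dataset_name][()]


def split_psi4_columns(dataset):
    """Split rows of (time, Re Psi4, Im Psi4) into three column lists."""
    t = [row[0] for row in dataset]
    re_psi4 = [row[1] for row in dataset]
    im_psi4 = [row[2] for row in dataset]
    return t, re_psi4, im_psi4


# Small in-memory stand-in for a dataset read from file.
example_rows = [[0.0, 1.0, -1.0], [0.5, 2.0, -2.0], [1.0, 3.0, -3.0]]
t, re_psi4, im_psi4 = split_psi4_columns(example_rows)
```

With a NumPy array the slicing `dataset[:,0]` used in the original script is equivalent and more efficient; the list-based helper is only meant to make the column layout explicit.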

POWER

| Homepage | https://git.ncsa.illinois.edu/elihu/Gravitational_Waveform_Extractor/ |

|---|---|

| Authors | Daniel Johnson, E. A. Huerta, Roland Haas |

| License | NCSA |

| Requirements | Python 2.7, NumPy, h5py |

A simple set of GW analysis tools in Python that computes the strain from Psi4 using fixed-frequency integration and extrapolates it to scri+ (future null infinity). It can be made to read Multipole data when using h5py.

./power.py simulations/J0040_N40
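For readers unfamiliar with fixed-frequency integration, the Python sketch below illustrates the basic idea: Psi4 is transformed to the frequency domain, divided by minus the square of the angular frequency, with frequencies below a cutoff f0 replaced by f0, and transformed back. This is not POWER's implementation. The function name `ffi_strain` is hypothetical, and a naive O(N^2) transform is used only to keep the example dependency-free; a real analysis would use an FFT library.

```python
import cmath
import math


def _dft(samples, sign):
    """Naive O(N^2) discrete Fourier transform with exponent sign `sign`."""
    n = len(samples)
    return [
        sum(samples[m] * cmath.exp(sign * 2j * math.pi * m * k / n)
            for m in range(n))
        for k in range(n)
    ]


def ffi_strain(psi4, dt, f0):
    """Integrate Psi4 twice in time using fixed-frequency integration.

    psi4: complex samples on a uniform time grid with spacing dt.
    f0:   cutoff frequency; bins with |f| < f0 are divided as if f = f0.
    """
    n = len(psi4)
    spectrum = _dft(psi4, -1)
    for k in range(n):
        # Signed frequency of bin k, in the usual FFT bin ordering.
        f = (k if k <= n // 2 else k - n) / (n * dt)
        omega = 2.0 * math.pi * max(abs(f), f0)
        # Two time integrations correspond to dividing by (i * omega)^2.
        spectrum[k] = -spectrum[k] / omega**2
    # Inverse transform, including the 1/N normalisation.
    return [value / n for value in _dft(spectrum, +1)]


# Single-frequency test signal that sits exactly on a frequency bin.
n_samples, dt, k0 = 64, 1.0, 8
omega0 = 2.0 * math.pi * k0 / (n_samples * dt)
psi4 = [cmath.exp(1j * omega0 * i * dt) for i in range(n_samples)]
strain = ffi_strain(psi4, dt, f0=0.05)
```

For this test signal the frequency lies above the cutoff, so the result should agree with the analytic double integral, -psi4/omega0^2, up to rounding error.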

SkyNet

SkyNet is a general-purpose nuclear reaction network that was specifically designed for r-process nucleosynthesis calculations, but SkyNet is also applicable to other astrophysical scenarios.

| Homepage | https://jonaslippuner.com/research/skynet/ |

|---|---|

| Authors | Jonas Lippuner, Luke Roberts |

| License | BSD 3-Clause License |

| Requirements | cmake, hdf5, gsl, boost |

Kuibit

kuibit is a Python library to analyze simulations performed with the Einstein Toolkit, largely inspired by Wolfgang Kastaun's PostCactus. kuibit can read simulation data and represent it with high-level classes. It also comes with a number of scripts that can be used immediately (e.g., to make plots or extract gravitational waves).

Partial list of features (for a full list, see documentation):

- Read and organize simulation data. Checkpoints and restarts are handled transparently.

- Work with scalar data as produced by CarpetIOASCII.

- Analyze the multipolar decompositions output by Multipoles.

- Analyze gravitational waves extracted with the Newman-Penrose formalism, computing, among other things, strains, overlaps, and energy lost.

- Work with the power spectral densities of known detectors.

- Represent and manipulate time series. Examples of functions available for time series: integrate, derive, resample, to_FrequencySeries (Fourier transform).

- Represent and manipulate frequency series, like Fourier transforms of time series. Inverse Fourier transform is available.

- Manipulate and analyze gravitational waves. For example, compute energies, mismatches, or extrapolate waves to infinity.

- Work with 1D, 2D, and 3D grid functions as output by CarpetIOHDF5 or CarpetIOASCII.

- Work with horizon data as output by QuasiLocalMeasures and AHFinderDirect.

- Handle unit conversion, in particular from geometrized to physical.

- Write command-line scripts.

- Visualize data with matplotlib.
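The time-series operations in the list above can be pictured with a small, library-independent Python sketch. It does not use kuibit or reproduce its classes; the function names `cumulative_integral` and `finite_difference` are hypothetical, and the trapezoidal rule and centred differences are simply common choices for such operations.

```python
def cumulative_integral(t, y):
    """Cumulative trapezoidal integral of y(t), starting from zero."""
    result = [0.0]
    for i in range(1, len(t)):
        result.append(result[-1] + 0.5 * (y[i] + y[i - 1]) * (t[i] - t[i - 1]))
    return result


def finite_difference(t, y):
    """Derivative of y(t): centred differences, one-sided at both ends.

    Requires at least two samples.
    """
    n = len(t)
    derivative = []
    for i in range(n):
        lo = max(i - 1, 0)
        hi = min(i + 1, n - 1)
        derivative.append((y[hi] - y[lo]) / (t[hi] - t[lo]))
    return derivative


times = [0.0, 0.5, 1.0, 1.5, 2.0]
values = [2.0 * t for t in times]                 # y(t) = 2 t
integral = cumulative_integral(times, values)     # samples of t^2
slope = finite_difference(times, values)          # constant slope of 2
```

Both rules are exact for the linear test function used here, which makes the example easy to check by hand.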

| Homepage | https://github.com/Sbozzolo/kuibit |

|---|---|

| Authors | Gabriele Bozzola |

| License | GPLv3 |

| Requirements | Python 3.6, packages listed in poetry.lock |

| Documentation | Documentation, Tutorials, Examples |

| Other Info | Available on PyPI, same design as PostCactus in Wolfgang Kastaun's PyCactus, Unit tests (automatic on commit) |

watpy

The CoRe Python package for the analysis of gravitational waves, based on scidata, Matlab's WAT, and pyGWAnalysis.

Requirements

The Python Waveform Analysis Tools are compatible with Python 3 (older versions of watpy were compatible with Python 2).

Features

- Classes for multipolar waveform data

- Classes to work with the CoRe database

- Gravitational-wave energy and angular momentum calculation routines

- Psi4-to-h via FFI or time integral routines

- Waveform alignment and phasing routines

- Waveform interpolation routines

- Waveform spectra calculation

- Richardson extrapolation

- Wave objects already contain information on merger quantities (time, frequency)

- Unit conversion package

- Compatible file formats: BAM, Cactus (WhiskyTHC / FreeTHC), CoRe database
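As an illustration of the Richardson extrapolation mentioned in the list above, here is a minimal Python sketch for two resolutions. It is not watpy's routine; the function name `richardson_extrapolate` is hypothetical, and the sketch assumes that the leading error term scales as the grid spacing to a known power `order`.

```python
def richardson_extrapolate(coarse, fine, ratio, order):
    """Extrapolate a quantity to zero grid spacing from two resolutions.

    coarse, fine: values computed at grid spacings h * ratio and h.
    ratio:        refinement ratio between the two resolutions (> 1).
    order:        assumed convergence order of the leading error term.
    """
    factor = ratio**order
    return (factor * fine - coarse) / (factor - 1.0)


def sample(h):
    """Toy quantity with exact value 1 and a second-order error term."""
    return 1.0 + 3.0 * h**2


extrapolated = richardson_extrapolate(sample(0.2), sample(0.1), ratio=2.0, order=2)
```

For the toy quantity the second-order error cancels and the extrapolated value recovers the exact result. With real waveform data the convergence order should be checked using a third resolution before the extrapolation is trusted.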

| Homepage | https://git.tpi.uni-jena.de/core/watpy |

|---|---|

| Authors | Andrea Endrizzi, Sebastiano Bernuzzi, and others |

| License | unknown |

| Requirements | Python 3.X, packages listed on https://git.tpi.uni-jena.de/core/watpy |

| Documentation | examples |

| Other Info | Install via pip install git+https://git.tpi.uni-jena.de/core/watpy |

MayaWaves

Mayawaves is a Python library for the analysis of numerical relativity simulations of binary black holes performed with the Einstein Toolkit or provided through the MAYA public waveform catalog.

| Homepage | https://mayawaves.github.io/mayawaves/ |

|---|---|

| Authors | Deborah Ferguson |

| License | GPL v3 |

| Documentation | ReadTheDocs |

| Requirements | Python 3.X with X <= 11, NumPy |

| Other Info | Install via pip install mayawaves |

aurel

Aurel is an open source Python package for numerical relativity analysis. Designed with ease of use in mind, it will automatically calculate relativistic terms.

| Homepage | https://robynlm.github.io/aurel/ |

|---|---|

| Authors | Robyn Munoz |

| License | GPL v3 |

| Citation | bibtex |

| Documentation | github.io |

| Requirements | Python 3.11, pip |

| Other Info | Install via pip install aurel |